You are not AI Native, yet.

tl;dr: If you’re not ready for a cultural transformation, you may be better off staying AI First.

Last month we spent over 60 hours on company workshops. The new topic in our executive track: how can we become AI Native? The uncomfortable truth: executives don’t know what AI Native means for them.

Usually teams have a loosely defined vision, and CEOs delegate the task to the executive they believe should drive the transformation. Or even worse, they hire for it.

“We don’t have a vision on what AI Native means.”

We kept hearing a version of this from both technical and non-technical teams.

Good intentions combined with a lack of organizational knowledge.

In all cases, leaders delegate or hire before understanding their current AI adoption landscape, the challenges their orgs are facing, and the cultural blockers to this required metamorphosis.

Winning with AI requires much more than defining KPIs, using new providers, or implementing new features. It requires an encompassing strategy that gets your team to fundamentally shift their working practices.

Here are our key learnings from those early conversations.

First: 4 ways things always go wrong.

Look at your organization. Everyone is already AI Aware. They have used AI in their personal lives, they are using providers that rely heavily on it, and they are likely relying on it heavily for their day-to-day busywork. That’s how it starts.

AI Breadlines

“Only 30% of the people will have access to Claude. We don’t think HR would benefit from it.” - HR Leader.

Leaders who don’t grasp the power of AI unintentionally reinforce the loop.

In many organizations, the most common blocker is access to AI. We have seen three ways that this happens:

Budget-conscious HR or Finance teams limit rollouts,

Lengthy legal and compliance reviews delay adoption,

Even when the above is not true, time is a restrictive factor.

Teams cannot afford to stop and learn when they are being asked to accelerate.

When you have lines of overworked, AI-hungry, brilliant employees trapped in the company’s own protective systems, progress will stall.

The knee-jerk answer from executive teams is to act fast and let everyone in the organization (or at least everyone who has the time for it, which can be a problem of its own) try their own thing…

AI Obscurity

A short-cut strategy to solve for AI Breadlines will take you directly to the dark ages.

Three months down the road, Alex in Marketing Growth has Claude Managed Agents running all her experiments. Tony, your superstar HRBP, is pulling business and performance reports with Open Code. And Anum in Sales is handling all her comms with a custom setup of ChatGPT workspaces. You are so proud. Every week, during your all-hands, someone new presents what they are doing.

A few months later, employees leave, move on to new projects, or are entrenched in their private solutions. One day, the alarm goes off when your CFO is trying to understand the ballooning AI spending. She looks at you with dismay, “nobody knows”, she says. This is what is going on:

You lost organizational knowledge.

Instead, you have a myriad of AI providers for each tiny problem. The person who actually decided on that provider doesn’t even remember why they did, which results in…

Lack of accountability.

When someone asks ‘how do we handle X’, nobody answers. New joiners ask their colleagues questions and receive bits and pieces of prompts, skills, and maybe links to old all-hands videos. Departments start distancing themselves because they don’t understand how the others work. It becomes a secret craft passed down to the lucky ones.

Motivated leaders will decide to start their own initiatives.

AI Fragmentation

Now every leader wants to have agency to define how their area works. They don’t have visibility into what others are doing, nor the time to wait, nor guidance from their leaders. Hence, they start devising their own strategies in isolation, creating dreaded silos.

Eventually you notice that:

Quality drops noticeably in your business.

Suddenly, you are exposed to anyone who doesn’t understand the pitfalls of AI. That means errors will happen, some small, some big. It’s difficult to keep up with AI best practices when AI is just a tool for you. Eventually you start seeing…

Eroded trust in AI.

Two stereotypes appear consistently in every AI chat: the “I know best and you are doing it wrong” camp (we will save this one for last), and the “we tried it, the technology doesn’t work” camp. The second camp is a result of the above. Half-baked solutions tend to lead to poor performance. If you are here, it means Alex, Tony, Anum, et al. are now running their own show without even talking with the rest of the company. They were tired of waiting for support from an expert or for a lengthy approval process, or you simply kept pushing them.

To sum it up, everyone is using AI, everyone has their own agents, nobody knows how everyone else works. This is the full isolation picture after an obscurity period. It’s the stage before the revolution. You can smell it in every conversation…

“I know best and you are doing it wrong.”

AI Brawls

One day, the CEO walks into the exec meeting and asks the most basic question: what’s our ROI on AI? And you land here.

Product says Tech is too slow, Tech says we are choosing the wrong projects.

Sales and Marketing are fighting over ownership of outbound agents.

Marketing and Product try to define overlapping growth experiments.

The list goes on.

Everyone is pushing for a different set of solutions, each says they’ve seen it working in their friend’s startup, the true AI way, and they want everything to follow their process. Or worse, this just keeps happening in the shadows.

What’s the worst that could happen?

Everyone says ‘yes’ and you end up spending thousands on busy AI slop. Humans like to avoid conflict, and now grunt work is “free”.

Second: It’s ok to be first, you don’t need to be native.

“We want to be AI Native!”

“Sure, how is it different from AI First?”

“<awkward silence>”

This was the most fun conversation I had in the past month. Truth be told, not everyone needs to become AI Native, and not everyone is willing to pay the price. What everyone does need to understand, though, is what AI Native means.

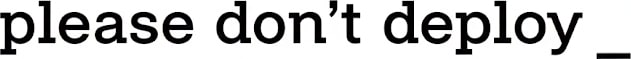

We see companies move through 4 stages:

AI Aware

This is the earliest stage of AI maturity. People know AI exists and matters, and they use it ad hoc.

Key indicators:

+ Usage still relies primarily on intuition.

Opinion, personal experience, and an employee’s political standing matter the most. There is no shared knowledge, no canonical skills, and no AI strategy.

+ It’s a discovery stage.

Limited governance, and almost no cross-departmental collaboration. Everything depends on having the right leaders in each team.

+ Adoption is being driven by peer pressure.

Typically there’s little understanding of the actual business impact the new practices have. A person shares their experiences, people copy that, and they always find that it wasn’t as great as they imagined.

You know you are here when leaders talk about AI Native but cannot describe what that would look like, or what success means for the company.

AI Augmented

The company decides to invest in shared AI infrastructure, deliberately increasing its capabilities. Teams and leaders begin investing in tools, processes, and personnel. Everyone recognizes the importance of a well-defined AI strategy.

Key indicators:

+ AI literacy starts to appear.

Training, shared resources, playbooks, guidelines and policies start taking shape. Onboarding includes understanding when and how the team uses AI. Departures stop having an impact on AI knowledge.

+ Provider debates start to creep in.

Departments and teams start to discuss which provider should be the chosen one. Should we have everything with Anthropic or OpenAI? Maybe Microsoft or Google? Governance starts being mentioned, and people start thinking that cross-provider integrations are needed.

+ Your first truly agentic flow appears.

Tech or Product leads the wave with a well-structured process: sequences of prompts and agents collaborating to produce an output. Gut feeling and politics still carry significant weight, and uncertainty dominates.

AI First

All activities are handled by AI first. And now every employee feels they have been leveled up. They are supervisors instead of ICs. Editors instead of writers. Their days are consumed by reviewing their agents’ work, and fine-tuning responses.

Now the organization’s problems are different. Nobody is truly worried about adoption or acceleration; they are worried about:

Outcome: Why are we even doing this?

Resources: optimizing costs and time wasted.

Brain fry: people seem more tired than usual, and the quality drops.

Until this point, your company has been operating faster, but processes are the same, maybe with minimal tweaks. The real bottleneck becomes coordinating your team, because now it behaves like a company that’s 10x the size.

You have hit your threshold on Organizational Cognitive Load.

The answer is to challenge the processes you have in place.

This is when you ‘flip the board’.

Time to become AI Native.

Third: The AI Native metamorphosis

At the end of our last workshop, a manager presented his learnings to the team. A single slide, with three quotes. Highlighted:

“Building multi-agent workflows is surprisingly approachable. You don’t code it, you just explain it.”

→ Tech becomes devex for the whole org.

The new mental model shouldn’t be “Tech is a product builder”. Instead, Tech provides leverage to the rest of the organization. Your CTO should be significantly closer to the COO than to the CPO.

That is the direct consequence of being truly AI Native. When building agentic workflows, maintaining them, and running them on the right infrastructure, with the right models, in a safe and secure manner, is as simple as chatting with a peer, you did it. Why?

Because you don’t need an engineer to add a simple feature, or to create an email campaign, or to change how you handle customer success. You need them perfecting a Compounding Intelligence Layer.

We have seen teams invest more than 50% of their time in this layer in the early days. Each hour brings a direct benefit to the whole organization in a matter of days. They are relentlessly focused on improving the glue that will make every agentic flow better each time a new model is released.

A few examples: your tech team is transparently incorporating new harnesses, creating a world model of your company, raising the standards for quality, security, and safety, and unifying the experience of managing these AI systems across the company. Everyone sees the benefits of focusing on the “machine that builds the machine” (MTBTM).

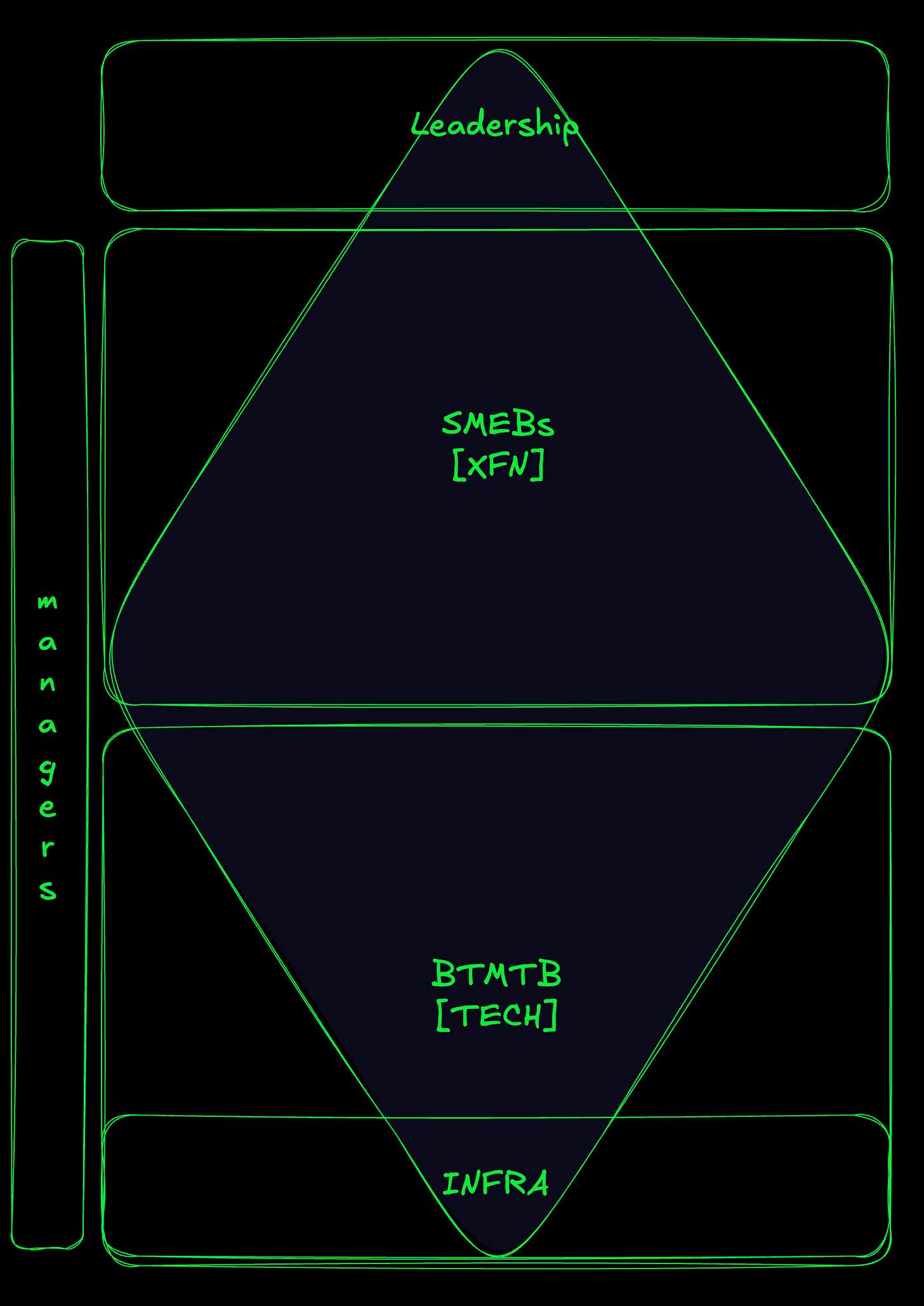

When you reach that state, your team is divided into 3 overarching career paths.

+ Subject matter expert builders (SMEB)

The ones that use AI to better your AI.

The Tech Crew improves the MTBTM and evangelizes the new goodies as they arrive. They are your Compounding Intelligence Layer: the cross-functional glue between humans and systems.

Other SMEBs work closely with the Tech Crew, focused on improving operational metrics in different departments. They are experts at adapting your AI to areas such as customer support, marketing, or UX. They create departmental leverage.

+ Directly Responsible Individuals (DRI)

Mission-driven individuals chasing a business result. They use all the leverage generated by the roles above to turn that raw power into ROI. The limit here is focus: a human cannot focus on several of these missions at the same time, so your organizational focus will limit the role.

+ Managers as an unavoidable consequence of large teams.

A human cannot advise, mentor, and check on more than ~7 other humans. So you will still need a role like this one if your team is big enough.

As an executive who is going to invest 50%+ of the Tech team in this transition, you need a plan. Your organization needs to radically distance itself from the processes it follows today. To do that, you need to change the culture and implement a new centralized system, so learnings and practices can spread in the blink of an eye.

Alignment is required at each and every level. And early failure is a given.

The-one-thing

AI Native is the job of a visionary CEO. It’s a top-down change in culture, vision, and resources. Otherwise, go AI First; it’s bottom-up.

“It does not make engineering easier, possibly the opposite… But the potential is huge!”

- A TL in that same workshop.